What do you mean I'm not the best prompt engineer on the internet?

A guide to what prompt engineering really is, and how to make users feel like they're prompt legends anyway.

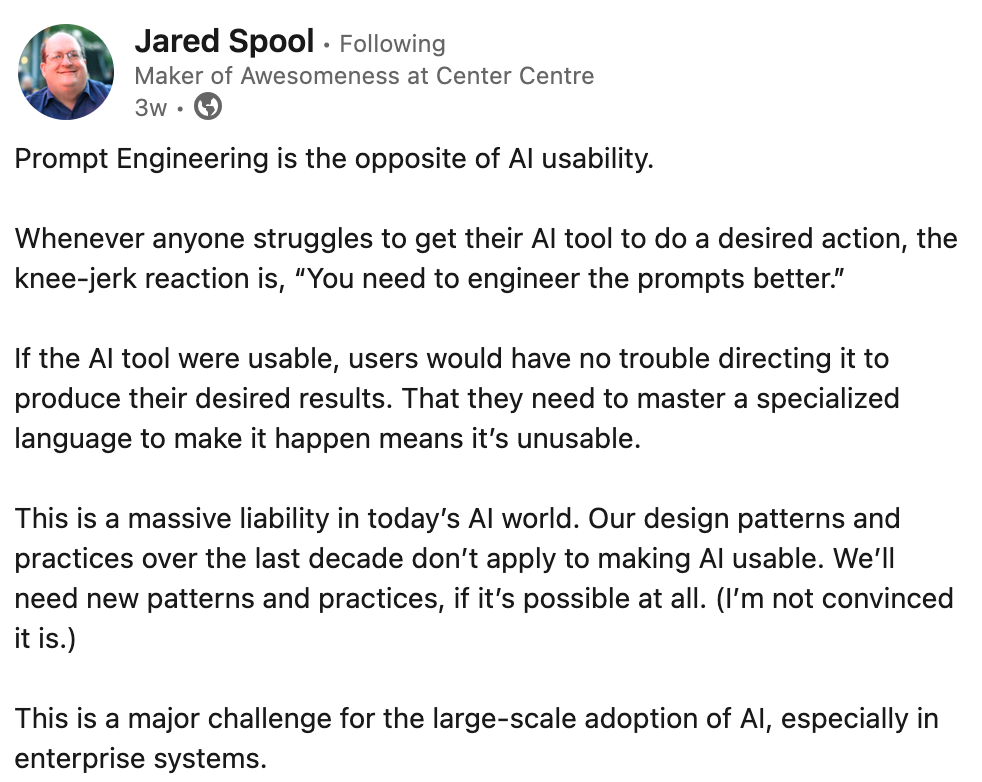

Jared Spool recently posted on LinkedIn that “Prompt Engineering is the opposite of AI usability” and that it all comes down to the basic sentiment that if AI were so usable, users shouldn’t need to adapt to make it work for them.

Close—but the underlying premise is incorrect. It conflates prompting (what people type) with prompt engineering (the product layer that translates messy intent into reliable output).

So, then, what the heck is “prompt engineering” and what does it have to do with UX?

As IBM frames it, prompt engineering “bridge[s] the gap between raw queries and meaningful AI-generated responses.”

Prompt engineering = the middle layer between input and output.

Prompt engineering ≠ users typing perfect phrases into a chat box.

Prompt engineering lives under the hood: system instructions, tool calls, retrieval, guardrails, evals, and UX patterns. Things that make average users feel like naturals without teaching them to “speak AI.” Your job as a UX practitioner isn’t to train users to be prompt wizards—it’s to design interfaces that remove the need for such wizardry.

The misconception

Myth: “We need to teach users how to craft expert prompts.”

Reality: Users don’t want homework. Your product should absorb the complexity via design, orchestration, and sensible defaults. When the UX is right, casual language or a couple of clicks are enough.

What “real” prompt engineering covers (beyond the input box)

- System & role instructions: Invisible guardrails for goals, tone, and scope.

- Orchestration & tool use: When to call calculators/APIs and how to stitch results.

- Retrieval & grounding (RAG): Feed trusted sources at answer time so outputs stay anchored.

- Guardrails & policy: Filters, refusals, and safe fallbacks for ambiguous asks.

- Evaluation & observability: Tests and metrics that prevent behavior drift.

- UX patterns: Interfaces that make average users feel like naturals.

None of that lives in the user’s head. It lives in the product.
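To make the "middle layer" concrete, here is a minimal sketch of how a product might wrap casual user input before any model sees it. Everything in it is an assumption for illustration: the names `SYSTEM_INSTRUCTION`, `BLOCKED_TOPICS`, `retrieve`, and `build_request` are hypothetical, the retrieval is a toy word-overlap ranking rather than a real search index, and no actual model is called.

```python
import re
from dataclasses import dataclass

# Hypothetical system instruction: the invisible guardrail for goals and scope.
SYSTEM_INSTRUCTION = (
    "You are a support assistant. Answer only from the provided sources. "
    "If the sources do not cover the question, say so."
)
# Hypothetical policy list used by the guardrail check below.
BLOCKED_TOPICS = ("password dump", "credit card numbers")


@dataclass
class Document:
    title: str
    text: str


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[Document], limit: int = 2) -> list[Document]:
    """Toy retrieval: rank documents by how many query words they share."""
    query_words = tokens(query)
    scored = [(len(query_words & tokens(doc.text)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked if score > 0][:limit]


def build_request(user_text: str, documents: list[Document]) -> list[dict[str, str]]:
    """Turn casual user input into the messages a chat model would receive."""
    lowered = user_text.lower()
    # Guardrail: return a safe fallback before any model is involved.
    if any(topic in lowered for topic in BLOCKED_TOPICS):
        return [{"role": "assistant", "content": "Sorry, I can't help with that."}]

    sources = retrieve(user_text, documents)
    grounding = "\n".join(f"[{doc.title}] {doc.text}" for doc in sources)
    return [
        {"role": "system", "content": SYSTEM_INSTRUCTION},
        {"role": "system", "content": f"Sources:\n{grounding or '(no matching sources)'}"},
        {"role": "user", "content": user_text},
    ]


docs = [
    Document("Billing FAQ", "Refunds are issued within 5 business days."),
    Document("Login help", "Reset your password from the sign-in page."),
]
messages = build_request("how do refunds work", docs)
refusal = build_request("give me a password dump", docs)
```

The user typed five casual words; the instructions, grounding, and refusal logic all came from the product.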

Why UX should care (and not panic)

You might be thinking: “You're wrong! Prompt engineering IS typing magic phrases in the box.” It's true that people will keep using “prompt engineering” to mean “whatever you type.” Fine, let’s roll with it. Even then, it isn’t anti-usability. Given everything above, it's safe to say prompt engineering is a UX practice: the craft of making AI accessible.

There is also more a UX designer can do to help users get the output they want right away:

- Good UX lowers the “prompt burden.” Clear affordances, structured inputs, and smart defaults reduce how much the user needs to type.

- Natural language ≠ natural behavior. People don’t want to write essays; they want to pick, tweak, and confirm. Your UI should meet them there.

- Trust is a design outcome. Grounding, citations, and transparent states (drafting → checking → done) matter more than teaching users fancy phrasing.

- Accessibility & equity. If success depends on “knowing the right words,” you’ve created a skill gate. Inclusive design removes that gate.

Patterns that make “prompting” disappear

- Task-first flows: Suggestion tiles like Summarize/Rewrite/Translate/Extract; users pick intent, the system builds the prompt.

- Inline affordances: Hover actions (friendlier, fix grammar, bullets); micro-intents beat macro-prompts.

- Structured prompts: Small fields (Audience, Tone, Length, Must-include); constraints > verbosity.

- Templates & snippets: Saved and customizable templates or prompts that help the user get moving.

- Context chips: Offer inline examples and “Try this” chips. Don’t ship a 30-page “Prompting 101.”

- Result-first editing: Generate → diff → tighten/expand/add sources; people react better than invent.

- Progressive disclosure: Simple default path; optional “Prompt Lab” for power users.
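As a rough illustration of task-first flows and structured prompts working together, the sketch below builds the full prompt from a tile click plus a few small fields. The tile names, the `PromptFields` dataclass, and `compose_prompt` are all hypothetical; a real product would tune the wording and defaults.

```python
from dataclasses import dataclass, field

# Hypothetical task tiles: each one-click intent maps to an instruction.
TASK_INSTRUCTIONS = {
    "summarize": "Summarize the text below.",
    "rewrite": "Rewrite the text below.",
    "translate": "Translate the text below into English.",
    "extract": "Extract the key facts from the text below as a list.",
}


@dataclass
class PromptFields:
    """Values collected from small form fields instead of free-form typing."""
    audience: str = "general readers"
    tone: str = "neutral"
    max_words: int = 150
    must_include: list[str] = field(default_factory=list)


def compose_prompt(task: str, text: str, fields: PromptFields) -> str:
    """Build the full prompt from a tile click plus a few constrained fields."""
    if task not in TASK_INSTRUCTIONS:
        raise ValueError(f"Unknown task: {task}")
    lines = [
        TASK_INSTRUCTIONS[task],
        f"Audience: {fields.audience}",
        f"Tone: {fields.tone}",
        f"Length: at most {fields.max_words} words",
    ]
    # Only mention required terms when the user actually supplied some.
    if fields.must_include:
        lines.append("Must include: " + ", ".join(fields.must_include))
    lines.append("")
    lines.append(f"Text:\n{text}")
    return "\n".join(lines)


prompt = compose_prompt(
    "summarize",
    "Q3 revenue rose 12% while support tickets fell.",
    PromptFields(audience="executives", tone="friendly", must_include=["revenue"]),
)
print(prompt)
```

The defaults carry most of the weight here: a user who clicks Summarize and changes nothing still gets a complete, constrained prompt.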

Potential Examples

1) Help desk

- Bad: “Describe your issue to the AI.”

- Better: Buttons that suggest helpful actions, such as Can’t log in, Billing question, Bug report → dynamic form → hidden prompt embeds ticket metadata.

- Outcome: Faster, cleaner intents; higher first-response accuracy.

2) Policy writing assistant

- Bad: Blank chat and “Ask me to draft a policy.”

- Better: Template chooser + fields (scope, jurisdiction, audience) + context chips (existing policy, legal refs) + result with citations.

- Outcome: Standardized output; measurable compliance.

3) Code review copilot

- Bad: “Paste code and ask for feedback.”

- Better: Repo-aware side panel with quick actions: “Find risky diffs,” “Add tests,” “Explain complexity hotspots,” with a visible checklist the model must address.

- Outcome: Consistent reviews, less guesswork, fewer false positives.
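The help desk flow in example 1 could be wired up along these lines. This is only a sketch under assumed names: the intents, their form fields, the metadata keys, and the functions `missing_fields` and `hidden_prompt` are invented for illustration and do not come from any real ticketing system.

```python
# Hypothetical intents (one per button) and the form fields each one shows.
INTENT_FORMS = {
    "cant_log_in": ["email", "error_message"],
    "billing_question": ["invoice_id", "question"],
    "bug_report": ["steps_to_reproduce", "expected", "actual"],
}


def missing_fields(intent: str, answers: dict[str, str]) -> list[str]:
    """Return required form fields the user has not filled in yet."""
    return [name for name in INTENT_FORMS[intent] if not answers.get(name, "").strip()]


def hidden_prompt(intent: str, answers: dict[str, str], metadata: dict[str, str]) -> str:
    """Compose the prompt the user never sees from button, form, and ticket metadata."""
    gaps = missing_fields(intent, answers)
    if gaps:
        # The UI would highlight these fields instead of sending anything.
        raise ValueError(f"Missing fields: {', '.join(gaps)}")
    form_lines = [f"- {name}: {answers[name]}" for name in INTENT_FORMS[intent]]
    meta_lines = [f"- {key}: {value}" for key, value in sorted(metadata.items())]
    return "\n".join(
        [f"Intent: {intent}", "User answers:", *form_lines, "Ticket metadata:", *meta_lines]
    )


answers = {"email": "sam@example.com", "error_message": "Invalid token"}
metadata = {"plan": "pro", "app_version": "4.2.1"}
ticket_prompt = hidden_prompt("cant_log_in", answers, metadata)
print(ticket_prompt)
```

The user clicked one button and filled in two fields; the intent label and ticket metadata were added without asking them to describe anything.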

A practical checklist for UX teams

- Define tasks first. What top 5 jobs-to-be-done should be one click away?

- Minimize blank states. Provide examples, templates, and quick actions.

- Show the scope. Make grounding sources and constraints user-visible.

- Prefer constraints over prose. Sliders, toggles, and fields beat long prompts.

- Design for correction. Make it trivial to refine, regenerate, and compare.

- Instrument and evaluate. Log intents, success criteria, and failure modes you can test against later.

- Offer an escape hatch. Advanced users can view/edit the underlying instruction (without forcing it on everyone).
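For the "instrument and evaluate" item, one possible starting point is a plain interaction log that can be summarized per intent. The `Interaction` fields and the definition of success below are assumptions; each team would need to decide what counts as a successful result in its own product.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged request. Field names are illustrative, not a standard schema."""
    intent: str
    succeeded: bool         # assumed meaning: user accepted the result as-is
    failure_mode: str = ""  # assumed labels such as "too_long" or "off_topic"


def success_rate_by_intent(log: list[Interaction]) -> dict[str, float]:
    """Share of successful interactions per intent, for spotting weak flows."""
    totals: dict[str, int] = defaultdict(int)
    wins: dict[str, int] = defaultdict(int)
    for item in log:
        totals[item.intent] += 1
        wins[item.intent] += int(item.succeeded)
    return {intent: wins[intent] / totals[intent] for intent in totals}


def failure_counts(log: list[Interaction]) -> dict[str, int]:
    """Count labeled failure modes so they can become test cases later."""
    return dict(Counter(item.failure_mode for item in log if not item.succeeded))


log = [
    Interaction("summarize", True),
    Interaction("summarize", False, "too_long"),
    Interaction("translate", True),
    Interaction("summarize", True),
]
rates = success_rate_by_intent(log)
failures = failure_counts(log)
```

Tracking these numbers over time is what lets a team notice behavior drift after a prompt or model change.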

AI terms you should also know:

- System prompt: Invisible instructions that define behavior and boundaries.

- RAG (retrieval-augmented generation): Feeding trustworthy documents to the model at answer time.

- MCP (Model Context Protocol): Lets AI models securely discover and talk to external tools and data in a consistent, portable way.

- Agentic / Agentic AI: AI systems that plan, take simple actions for the user (e.g., call tools), observe results, and iterate toward a goal with minimal supervision.

- Generative AI: Models that create new content (text, images, code) from patterns learned in data.

- Machine Learning (ML): Systems that learn patterns from data instead of following explicitly programmed rules.

- Hallucinations: Confident but incorrect outputs produced by a model without adequate grounding.

- Context: The information given to a model at answer time—system prompt, user input, retrieved docs, tool results.

- LLM (Large Language Model): A neural model trained on massive text to predict tokens and generate coherent language.

- Natural Language Processing (NLP): Techniques for analyzing, understanding, and generating human language with computers.

- Copilot: An in-app assistant that suggests, explains, or automates steps while the human stays in control.

Reality check: soonish 👀

Rising AI literacy will make prompt training, most specialized prompt engineering, and this post largely obsolete.

The bottom line

Prompt engineering isn’t a user skill; it’s a product responsibility. It is a form of UX, and its role is to translate messy, real-world goals into clean intents, provide scaffolding that makes average inputs produce excellent outputs, and keep the heavy lifting (prompt composition, retrieval, tools, and safety) behind the scenes.

If your team is asking, “How do we train users to prompt better?” flip it to: “How do we design the product so they don’t have to?”